| Benjamin LeGeyt wrote: |

|

Hello!

let me apologize in advance if this has already been covered somewhere and I missed it.

I have a question about a statement made regarding the ADC clock in the evaluation board v4.0 manual. At the bottom or page 23 there is a mention of jitter between the SRCLK signal and the ADC clock causing a baseline variation in the sampled output of up to a few mV. Is there any more information out there about this? I find this confusing for the following reason: If the DRS output has mostly settled after 28ns and the signal that is being sampled is a DC signal, I don't understand why an aperture jitter in the sampling ADC should cause a voltage error in the measured signal. I already know about the possibility of noise spikes every 32 samples if these clocks are not properly aligned, though I don't know the origin of those spikes. are these two things related?

Many Thanks!

|

Hi Benjamin,

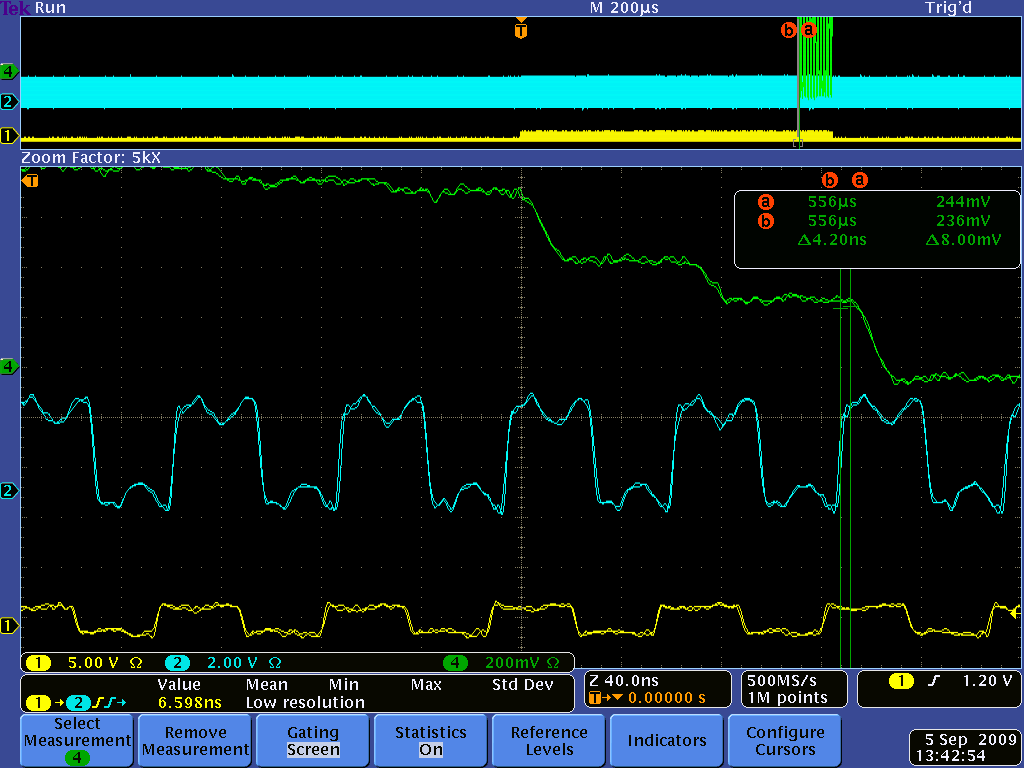

In principle you are right, for a DC signal that should not matter. But in reality the DRS4 output signal is not constant even for a DC signal. When you switch from one sampling cell to another during readout, there is something called "charge injection". This causes the output to change up to several 10 mV. After 28 ns this is mostly settled, but not completely, since the DRS4 output driver has a relatively low bandwidth (~50 MHz). Furthermore, the signal line between the DRS4 and the ADC is not terminated, so you have some reflections going forth and back. In addition, you have some crosstalk from the SRCLK signal. So it's better that you sample on each cycle at exactly the same time. Here you see a plot of that (green: DRS4 output, blue: ADC clock, yellow SRCLK):

|