|

Dear DRS4 team,

I'm experiencing some issues that seem to be isolated to the ASIC, and would like to understand if we are doing something wrong. There are several items to address in the post.

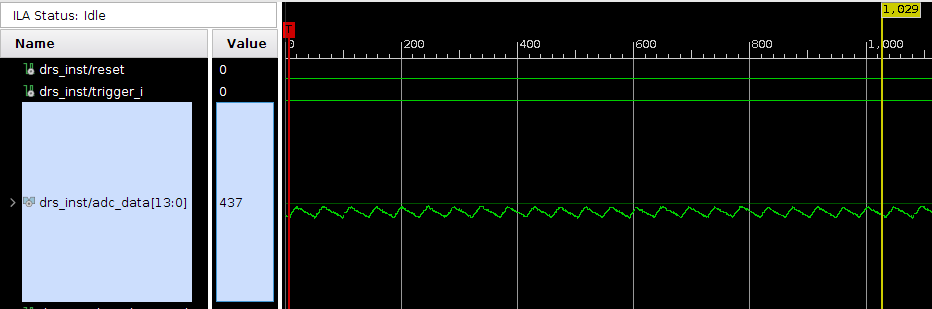

First, I do not think the noise observed is being injected from elsewhere on the board. If I run the DRS in transparent mode, the baseline noise is low, on order 3.5 mV (60 ADU), perhaps radiated from a clock. See below image. The scale is 0 to 1000 ADU with LSB = 6 uV (same AD9245 as eval board.). The DRS is in RUNNING state, I have forced a trigger in the ILA. This is for a single channel, CH0, 1024 cells.

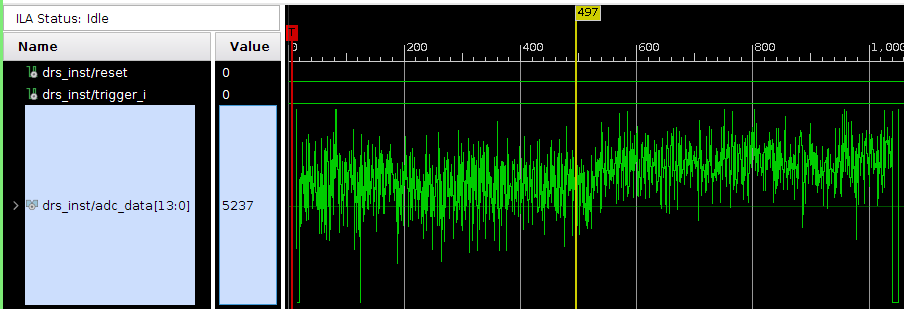

In the next image, I show the waveform obtained from a full readout. This corresponds to ADC_READOUT state, and the plot uses the same 1000 ADU scale. Noise seems around 350 ADU now, many factors worse than before.

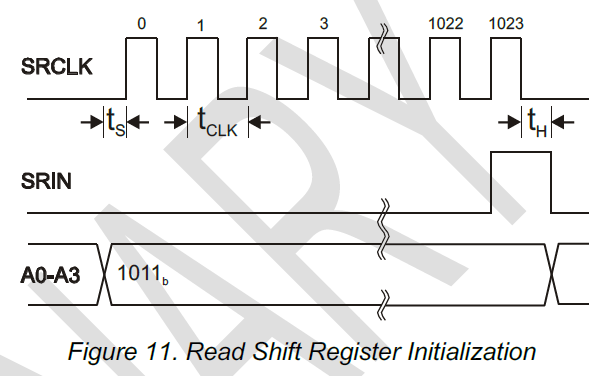

We've spent a lot of time trying to understand what's happening. One area that would be helpful to get some guidance on is the "t_samp" parameter. In Fig. 11 of the data sheet, should there be a t_samp label between t_s and t_clk? It just has arrows there with some width.

In our current firmware I believe R1 is simply one clock after R0 (for both ROI and full readout mode). Would this lead to the added noise observed in muxout?

This leads to the next question on what to actually use for t_samp. In the data sheet, page 4 "Timing Characteristics" it says to use t_samp = t0 + t_clk. Additionally, t0= 10 ns from that table. Fair enough.

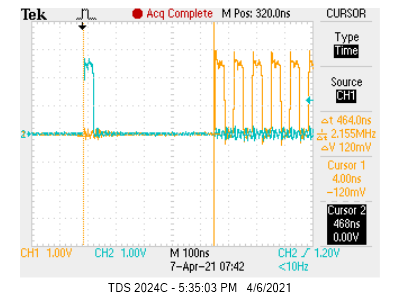

But if I check this against the eval board timing, I see very different values. Here the clock is 15 MHz so t_clk=67 ns (I note another post about this topic https://elog.psi.ch/elogs/DRS4+Forum/713), so I expect t_samp = 77 ns. But in practice it looks like the R0 to R1 delay is ~465 ns? (cyan=RSRLOAD, yellow=SRCLK)

Given this, is t_samp a value that should be tuned by the user?

Best regards,

Sean

|